This tactic is simple, and means exactly what it says. If you’re familiar with design sprints, then you understand the value of validating a prototype as quickly as possible.

At the outset of any project, we’re always staring down significantly more questions than we can answer. That’s the normal order of things, but it encourages a tendency to want to answer them all before doing anything.

The way to get clarity is to start working, start prototyping. For the teams I’ve worked with, the 48-hour rule usually plays out like this.

Days 1 and 2: Contextual Use Scenarios

We start with stakeholders, naturally. They’re usually immediately accessible and are also itching to voice their concerns.

Our first order of business is to get the lay of the internal land, politically as well as tactically. We want to find out where each stakeholder is coming from, what they expect to happen and where their goals conflict with those of other departments. We also want to know what happens to each person’s world if the project succeeds or fails.

The first 48 hours of the project are spent in meetings with stakeholders and users; each session focuses on a specific user group or a specific department within the organization. If the product in question is used by employees, getting these folks to the table is fairly simple. If end-users belong to a customer, however, doing this may be a bit more challenging, as we discussed earlier. In both cases, we have anywhere from 6 to 12 people gathered around a table.

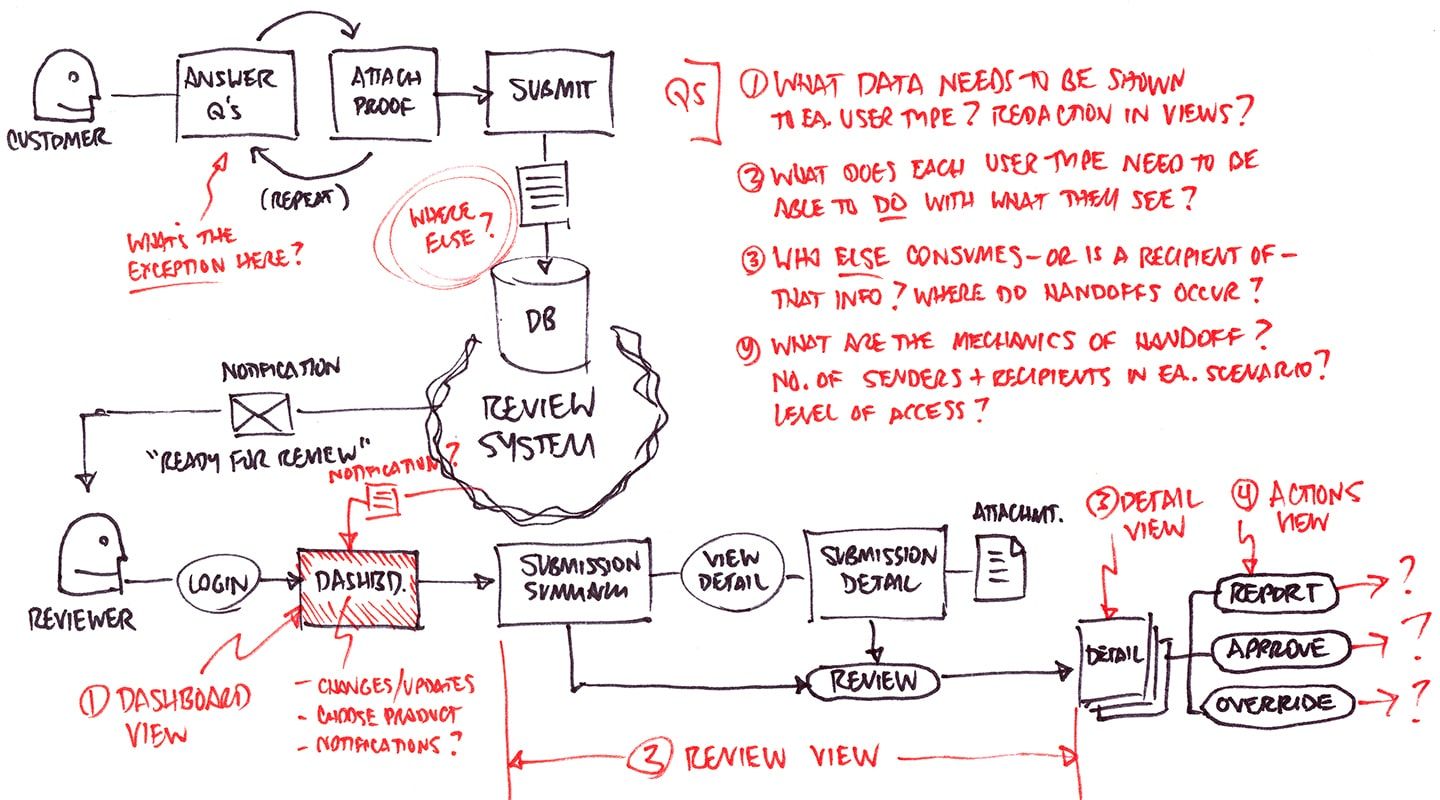

The day is spent walking through how people use the system now (if we’re redesigning or updating) or how we believe they will use the new system (if it’s a completely new product). In addition, we’re diagramming the process as discussion occurs. The only tools necessary are a whiteboard and a table with a lot of seats. What goes on the board is boxes, arrows and questions, most of which consist of simply asking “why?” The drawing is what turns this into a working meeting instead of a verbal sparring match.

The sessions work like this:

1. We ask a user (or stakeholder/IT Manager/Account Rep) to walk us through their daily work processes verbally: “On any given day, how do you do your work?”

2. If there are multiple tasks, activities, and processes, we go through each one. As they talk, we draw people, boxes, and arrows on a whiteboard that describe what happens, which usually looks something like this:

3. The only deliverable from these meetings is a photograph of the whiteboard, shared with all involved. The core process is usually in black marker, with problem areas and related issues or questions in red or orange. The color separation allows us to focus on those areas quickly when we refer to it later.

The visual representation helps everyone get to clarity much more quickly than if they had to imagine it in their heads. It also gives the dev team the ability to confirm and correct as we go: “Does it work like this?”

For now, forget formal use cases, forget formal diagramming methodologies and rules. Just draw it out and label who the players are and what they’re doing. As you draw, you ask questions:

- What should happen here, and what actually happens?

- What happens (or should) next?

- What can (or should) the next person in the process do with what they have?

The whiteboarding exercise is a very simple way of getting a baseline for what gets created, what gets acted upon, and how it all moves through any particular process. We’re focusing on the people, their goals and how they do what they do every day.

When you’re done, it’s worth photographing the whiteboard for later reference.

At this point you may be wondering, “When does in-depth user research happen?” The short answer: later. While it’s possible to work preliminary user interviews into the first 48-hour period, these are cursory shots across the bow: short, 15-minute conversations to get a baseline for who does what with the tool at hand. But if that doesn’t happen at this point, that’s OK, and here’s why.

Some enterprise organizations are still reluctant to devote significant effort to user research and testing. We know we’re short on time and resources, so we need to make every activity and decision count.

So instead of spending a lot of time with users up front, we’ll break up our research into chunks, so (a) we’re not committing large blocks of time to this at the expense of iterating design or development, and (b) we’re creating a low-fidelity prototype to put in front of users, instead of asking them to imagine using it.

Day 3: Prototyping, Information and Action

While screen layout and interaction mechanics are legitimate concerns, the bigger fish here is the volume — and clear separation — of information and action.

Starting the prototype process early in a collaborative platform helps you come up with ways to separate the two as well. That matters a great deal, because this separation is the foundation of good UX and sound interaction design. Keep two things distinct:

- Things the user needs to know, and

- Things the user needs to be able to do.

Getting a handle on the relative importance of both is where you focus your effort. The latter is up-front and center; the former accessible but tucked out of the way and used only when needed — and in such a way that invoking it doesn’t obscure or compete with the user action.

You use the prototyping process to answer the questions that have arisen over the past two days of strategy discussions:

- How much content and/or data do we need to expose?

- How many different types or formats do we have (e.g. raw data, text, audio, video, etc.)?

- How much of the data is static vs. interactive?

- Where is it coming from — how many sources or systems?

- How will people get to all of it?

- How should it be organized, categorized and labeled?

- What matters most to users, and in what order of priority?

- How might we stand it up in the interface?

- What interaction patterns match what the user needs or expects?

- Is each pattern appropriate for the volume and type of content they’re manipulating or viewing?

You and I both know how easy it would be to spend weeks trying to formulate answers to these questions. But we also know that isn’t feasible, because we have neither the time nor the insight necessary to do so.

Instead, we quickly work towards a model that captures the volume of content and represents the ideal separation of that content from the mechanisms and controls. We’re working quickly to iterate and explore data types, navigation, labeling and interaction patterns. We’re socializing each iteration with our users, with the IT Managers or Account Reps responsible for helping them and/or with our stakeholders. We keep what works and throw out what doesn’t. Try, evaluate, revise.

We’ll use this artifact to guide everything that follows. We will absolutely revisit all of these questions as we test the prototypes with users, but we don’t wait for clarity before starting our prototype. We need to put something in front of people as soon as humanly possible to determine whether or not we’re on the right path.

For more practical advice, download the 91-page e-book Fixing the Enterprise UX Process by Joe Natoli.